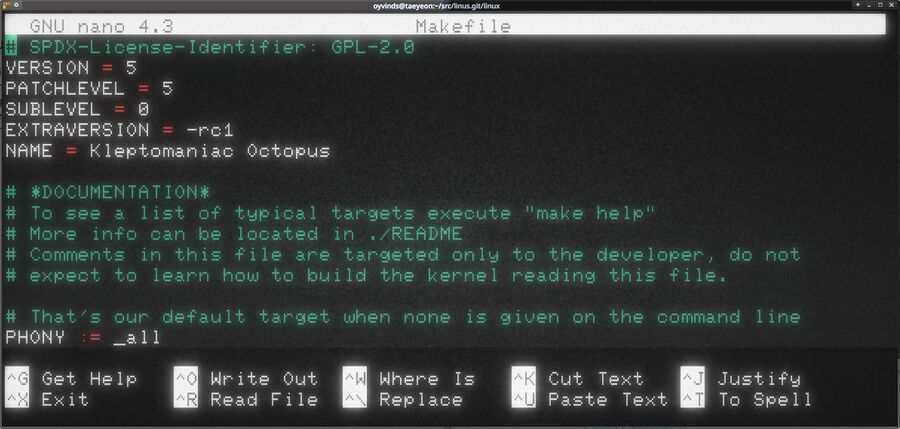

Linux Kernel 5.5 "Kleptomaniac Octopus" RC1 Is Released With Live Patching, Reworked Fair Scheduler And More

Linus Torvalds has slammed the merge window for version 5.5 of the Linux kernel shut with the first release candidate leading up to the next major version. A close inspection of the changed source files reveals that 5.5 will support improved live patching, parallel CPU microcode updates, NVMe temperature monitoring and much more. There appears to be an unusually large array of new features coming to Linux Kernel 5.5 which is, apparently, named "Kleptomaniac Octopus".

written by 윤채경 (Yoon Chae-kyung) 2019-12-09 - last edited 2019-12-18. © CC BY

A close inspection of the Makefile in the Linux kernel git tree reveals that 5.5 rc1 is codenamed "Kleptomaniac Octopus".
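The codename sits in a small block of variables at the top of the kernel's top-level Makefile. As a rough illustration, the sketch below parses such a block from a sample string that mirrors the 5.5 rc1 values; the helper names `parse_kernel_makefile` and `describe` are made up for this example and are not part of any kernel tooling.

```python
import re

# Sample of the variable block at the top of the kernel's top-level Makefile.
# The values mirror the 5.5 rc1 release described in this article.
SAMPLE_MAKEFILE = """\
# SPDX-License-Identifier: GPL-2.0
VERSION = 5
PATCHLEVEL = 5
SUBLEVEL = 0
EXTRAVERSION = -rc1
NAME = Kleptomaniac Octopus
"""

def parse_kernel_makefile(text):
    """Return the version-related variables found in Makefile text as a dict."""
    wanted = {"VERSION", "PATCHLEVEL", "SUBLEVEL", "EXTRAVERSION", "NAME"}
    found = {}
    for line in text.splitlines():
        match = re.match(r"^([A-Z]+)\s*=\s*(.*)$", line)
        if match and match.group(1) in wanted:
            found[match.group(1)] = match.group(2).strip()
    return found

def describe(fields):
    """Build a human-readable release string from the parsed fields."""
    version = "{VERSION}.{PATCHLEVEL}.{SUBLEVEL}{EXTRAVERSION}".format(**fields)
    return '{} "{}"'.format(version, fields["NAME"])

info = parse_kernel_makefile(SAMPLE_MAKEFILE)
print(describe(info))  # prints: 5.5.0-rc1 "Kleptomaniac Octopus"
```

To try it on a real source tree, read the Makefile from the checkout and pass its contents to `parse_kernel_makefile` instead of the sample string.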

The major changes in Linux 5.5 are now in, since the merge window closed with the release of RC1. The final 5.5 release will be available in early 2020. Linus Torvalds had this to say about the 5.5 rc1 release in the announcement on the kernel mailing list:

"We've had a normal merge window, and it's now early Sunday afternoon, and it's getting closed as has been the standard rule for a long while now.

Everything looks fairly regular - it's a tiny bit larger (in commit counts) than the few last merge windows have been, but not bigger enough to really raise any eyebrows. And there's nothing particularly odd in there either that I can think of: just a bit over half of the patch is drivers, with the next big area being arch updates. Which is pretty much the rule for how things have been forever by now.

Outside of that, the documentation and tooling (perf and selftests) updates stand out, but that's actually been a common pattern for a while now too, so it's not really surprising either. And the rest is all the usual core stuff - filesystems, core kernel, networking, etc.

The pipe rework patches are a small drop in the ocean, but ended up being the most painful part of the merge for me personally. They clearly weren't quite ready, but it got fixed up and I didn't have to revert them. There may be other problems like that that I just didn't see and be involved in, and didn't strike me as painful as a result ;)

We're missing some VFS updates, but I think we'll have Al on it for the next merge window. On the whole, considering that this was a big enough release anyway, I had no problem going "we can do that later".

As usual, even the shortlog is much too large to post, and nobody would have the energy to read through it anyway. My mergelog below gives an overview of the top-level changes so that you can see the different subsystems that got development. But with 12,500+ non-merge commits, there's obviously a little bit of everything going on.

Go out and test (and special thanks to people who already did, and started sending reports even during the merge window),

Linus"

December 8th, 2019

Better "Completely Fair" Scheduler

It turns out that the Linux kernel's "Completely Fair" process scheduler wasn't all that fair. Linus merged a large scheduling patch-set by Vincent Guittot which reworks its load-balancing in an attempt to make it actually "completely fair". The comments for that rather large patch-set, which made it into 5.5 rc1, are:

"The biggest changes in this cycle were:

- Make kcpustat vtime aware (Frederic Weisbecker)

- Rework the CFS load_balance() logic (Vincent Guittot)

- Misc cleanups, smaller enhancements, fixes.

The load-balancing rework is the most intrusive change: it replaces the old heuristics that have become less meaningful after the introduction of the PELT metrics, with a grounds-up load-balancing algorithm.

As such it's not really an iterative series, but replaces the old load-balancing logic with the new one. We hope there are no performance regressions left - but statistically it's highly probable that there *is* going to be some workload that is hurting from these changes. If so then we'd prefer to have a look at that workload and fix its scheduling, instead of reverting the changes"

Improved Live Kernel Patching

Linux 5.5 will have a brand new system state API written by SUSE developer Petr Mladek. The kernel's existing live patching functionality can now track the system state when patches are applied. This is especially useful if a newly applied patch does not work out: applying a patch to a running kernel can change the system in ways that make it unsafe to go back to the original kernel code or to a previous version of the patch.

The new livepatch/system-state.rst kernel documentation describes the new system state API as:

"Some users are really reluctant to reboot a system. This brings the need to provide more livepatches and maintain some compatibility between them.

Maintaining more livepatches is much easier with cumulative livepatches. Each new livepatch completely replaces any older one. It can keep, add, and even remove fixes. And it is typically safe to replace any version of the livepatch with any other one thanks to the atomic replace feature.

The problems might come with shadow variables and callbacks. They might change the system behavior or state so that it is no longer safe to go back and use an older livepatch or the original kernel code. Also any new livepatch must be able to detect what changes have already been done by the already installed livepatches.

This is where the livepatch system state tracking gets useful. It allows to:

- store data needed to manipulate and restore the system state

- define compatibility between livepatches using a change id and version

"

This is good for cloud-providers who are typically very "reluctant to reboot a system".
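The "change id and version" idea from the documentation can be modelled in a few lines. The sketch below is a simplified Python model, not kernel code: the `State` and `Livepatch` classes and the `is_compatible` function are hypothetical names, and the rule it encodes (a new livepatch must carry every already-modified state at the same or a higher version, and a cumulative patch must know about every state it replaces) is our reading of how such a check could work rather than a copy of the kernel implementation.

```python
from dataclasses import dataclass, field

@dataclass
class State:
    id: int        # identifies one kind of system-state change
    version: int   # raised when the change is made in an incompatible way

@dataclass
class Livepatch:
    name: str
    replace: bool                       # True for a cumulative (atomic replace) patch
    states: list = field(default_factory=list)

    def get_state(self, state_id):
        """Return this patch's State with the given id, or None."""
        for state in self.states:
            if state.id == state_id:
                return state
        return None

def is_compatible(new_patch, installed):
    """Check a new patch against the states of already installed patches."""
    for old_patch in installed:
        for old_state in old_patch.states:
            new_state = new_patch.get_state(old_state.id)
            if new_state is None:
                # A cumulative patch must handle every state it takes over.
                if new_patch.replace:
                    return False
                continue
            # An older version cannot safely take over a newer state.
            if new_state.version < old_state.version:
                return False
    return True

installed = [Livepatch("fix-v2", replace=True, states=[State(id=1, version=2)])]
newer = Livepatch("fix-v3", replace=True, states=[State(id=1, version=3)])
older = Livepatch("fix-v1", replace=True, states=[State(id=1, version=1)])
print(is_compatible(newer, installed), is_compatible(older, installed))
```

In this model, loading "fix-v3" over "fix-v2" is accepted while going back to "fix-v1" is refused, which is the kind of decision the state tracking is meant to make possible.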

Parallel CPU Microcode Updates Are Back

On the topic of cloud providers: they will no longer have to wait the entirety of several milliseconds when they apply CPU microcode updates to mega-core systems. The Linux kernel used to apply CPU microcode in parallel. This feature was removed due to the huge number of bugs and flaws in Intel CPUs. That's a problem for cloud providers and others with systems with a huge number of cores, because you can't just hand a CPU some microcode and be done with it; you have to update each CPU core.

Borislav Petkov got a patch which updates CPU microcode in parallel into 5.5 rc1. It's described as:

"This converts the late loading method to load the microcode in parallel (vs sequentially currently). The patch remained in linux-next for the maximum amount of time so that any potential and hard to debug fallout be minimized.

Now cloud folks have their milliseconds back but all the normal people should use early loading anyway :-)"

"Early loading" refers to loading microcode at the very start of the boot process. The first kernel ring buffer line, which can be inspected using the dmesg command, will typically be something like "microcode: microcode updated early to revision 0x38, date = 2019-01-15". A cloud provider is not going to reboot hundreds of 64-core systems with 200+ customer containers on each unless it is absolutely necessary.
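For anyone who wants to check this in a script, the early-loading line quoted above is easy to pick out of dmesg output. This is a minimal sketch that only assumes the message format shown in this article; `parse_early_microcode` is a made-up helper name, and the sample line with its timestamp prefix is illustrative.

```python
import re

# Matches the early-loading message format quoted in the article.
EARLY_UCODE = re.compile(
    r"microcode: microcode updated early to revision (0x[0-9a-fA-F]+), "
    r"date = (\d{4}-\d{2}-\d{2})"
)

def parse_early_microcode(log_text):
    """Return (revision, date) from the first matching line, or None."""
    for line in log_text.splitlines():
        match = EARLY_UCODE.search(line)
        if match:
            # The revision is printed as hex; convert it to an integer.
            return int(match.group(1), 16), match.group(2)
    return None

sample = (
    "[    0.000000] microcode: microcode updated early to "
    "revision 0x38, date = 2019-01-15"
)
result = parse_early_microcode(sample)
print(result)  # prints: (56, '2019-01-15')
```

Feeding it the text captured from the dmesg command instead of the sample string would report the revision loaded on the running system, or `None` if no early update took place.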

Kernel-Side GPU Fixes

There are a lot of fixes for the i915 and amdgpu kernel modules used for Intel and AMD graphics respectively (i915 isn't just for the old i915 chipset; it should be named intelgpu but isn't for historical reasons). We have yet to test whether this translates to a usable kernel-side driver for Intel GPUs. It's plain broken in 5.4-5.4.2 as well as in 5.3 series kernels prior to 5.3.14.

The graphics fixes on the AMD side are mainly for AMD's new gfx10 "Navi" graphics cards. There are, apparently, people running Linux on 8k monitors. amdgpu will now figure out if there is too little bandwidth for things like HDR between the graphics card and the display (cable, differing standards, etc.) and revert to a less demanding display mode that is capable of the same resolution. There was a similar issue with 4k displays using HDMI some years back which was solved in the same fashion.

NVMe Temperature Monitoring

There is a small utility called nvme which will let you read an NVMe drive's temperature. It requires root access and it does not play well (or at all) with standard system utilities like sensors from the lm_sensors package. Kernel 5.5 adds NVMe temperature to the HWMON infrastructure that most fan and temperature-related tools use. The new NVME_HWMON Kconfig option defaults to N. GNU/Linux distributions will probably enable it in their kernels.
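With HWMON support enabled, the temperature becomes an ordinary sysfs file that any user can read. The sketch below assumes the usual hwmon layout, where each device directory under /sys/class/hwmon has a name file and a temp1_input file in millidegrees Celsius, and that the NVMe device reports the name "nvme". The function name `read_nvme_temps` is made up, and the demo runs against a temporary fake directory tree so it works on machines without the driver.

```python
import os
import tempfile

def read_nvme_temps(hwmon_root="/sys/class/hwmon"):
    """Return {hwmon directory name: degrees Celsius} for devices named 'nvme'."""
    temps = {}
    if not os.path.isdir(hwmon_root):
        return temps
    for entry in sorted(os.listdir(hwmon_root)):
        base = os.path.join(hwmon_root, entry)
        try:
            with open(os.path.join(base, "name")) as f:
                if f.read().strip() != "nvme":
                    continue
            with open(os.path.join(base, "temp1_input")) as f:
                # hwmon reports temperatures in millidegrees Celsius.
                temps[entry] = int(f.read().strip()) / 1000.0
        except (OSError, ValueError):
            continue  # skip devices with missing or unreadable files
    return temps

# Demo against a fake sysfs tree (assumed layout, made-up values).
with tempfile.TemporaryDirectory() as root:
    fake = [("hwmon0", "nvme", "38000"), ("hwmon1", "coretemp", "45000")]
    for dirname, chip, raw in fake:
        os.makedirs(os.path.join(root, dirname))
        with open(os.path.join(root, dirname, "name"), "w") as f:
            f.write(chip + "\n")
        with open(os.path.join(root, dirname, "temp1_input"), "w") as f:
            f.write(raw + "\n")
    demo = read_nvme_temps(root)

print(demo)  # prints: {'hwmon0': 38.0}
```

Calling `read_nvme_temps()` with no argument reads the real sysfs tree and returns an empty dictionary on kernels built without NVME_HWMON.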

Using an NVMe drive's temperature to control things like case fans using fancontrol from the lm_sensors package or other software may not make that much sense, but it's great to have the option. People do custom things; I use the GPU temperature to control my case's bottom intake fan.

Initial Intel Lightning Mountain SoC Support

Linux 5.4 brought some initial support for Intel's "Tiger Lake" platform. 5.5 rc1 has new GPIO support for "Tiger Lake" (PINCTRL_TIGERLAKE) and a new pinctrl and GPIO driver for the Intel "Lightning Mountain" SoC (PINCTRL_EQUILIBRIUM). A patch-set with eMMC PHY support for the "Lightning Mountain" SoC is kicking around on the LKML (it may or may not get merged later in the 5.5 release cycle). That "Lightning Mountain" has an eMMC flash storage PHY built into it indicates that it is an SoC meant for something like smartphones, tablets or ultrabooks. Your guess is as good as ours.

Expect Linux Kernel 5.5 Final Mid-February

The kernel release cycle is typically 6-8 weeks, which would make early February a good guesstimate for a final 5.5 kernel release. Two weeks will likely be lost to Christmas, so mid to late February is a more likely target for a final 5.5 release.
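The arithmetic behind that guesstimate is simple date addition. The sketch below starts from the rc1 date of December 8th, 2019 and adds the 6-8 week cycle plus the assumed two-week holiday delay; the `estimate` helper is a made-up name and the result is only as good as those assumptions.

```python
from datetime import date, timedelta

RC1 = date(2019, 12, 8)  # rc1 announcement date given in this article

def estimate(weeks, holiday_weeks=0):
    """Return rc1 plus the cycle length and any assumed holiday delay."""
    return RC1 + timedelta(weeks=weeks + holiday_weeks)

print(estimate(6))      # prints: 2020-01-19
print(estimate(8))      # prints: 2020-02-02
print(estimate(8, 2))   # prints: 2020-02-16
```

An 8-week cycle lands in early February, and two lost holiday weeks push that to mid-February.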

The 5.5 RC1 release candidate is available at kernel.org. We absolutely do not recommend that you install it unless you are unusually interested in kernel development. This is the first 5.5 release candidate, and RC1 releases typically have issues you do not want on a computer you use for fun or work on a daily basis.

