Google Is Working On A New And Possibly Better LRU Memory Management Framework For The Linux Kernel

Linux loves to fill all available system memory with all sorts of caches. Memory used by caches needs to be freed if a program needs it. That task is handled by the Linux kernels Least Recently Used (LRU) framkework. Google is now proposing a new and, in some cases, better way to decide what should and shouldn't be thrown out of memory in case an application needs it.

written by 권유리 (Kwon Yu-ri) 2021-04-16 - last edited 2021-04-16. © CC BY

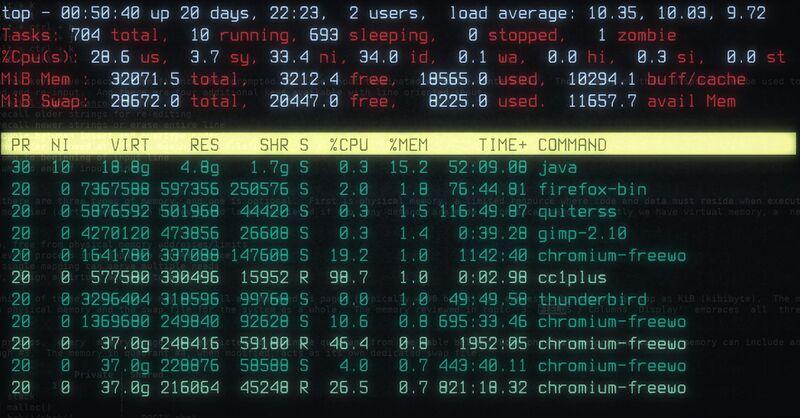

top showing memory and process information. The 10294 MiB used for "buff/cache" is filled yet it is available to be used by system applications if they need it. The Linux kernels LRU framework decides what gets thrown out and when.

The linux kernel loves to fill all available RAM with caches of files and other items. This speeds things up because memory caches are much faster than Direct I/O. One obvious bi-effect is that all system RAM can be in use and, at the same time, be free as in available for applications who may need it.

The Linux kernels Least Recently Used (LRU) framework decides what gets kept and what gets thrown out in case some system memory is needed by an applications. It covers the majority of a machines memory pages. Slab caches and a few other caches are exceptions. LRU pages are put in one of two linked lists with active or inactive pages. Pages taken from the end of the inactive list are freed unless it has the reference bit set. Pages are, in that case, moved to the beginning of the active page list and the reference bit is cleared. Dirty pages, as in pages that should be written to disk, are put in the writeback queue and later moved to the beginning of the inactive memory list. Memory pages that are unreferenced and clean get reused.

Google's Yu Zhao is currently proposing a brand new way of doing LRU with a series of 16 patches titled Multigenerational LRU Framework. The stated motivation behind it is:

"The current page reclaim is too expensive in terms of CPU usage and often making poor choices about what to evict. We would like to offer an alternative framework that is performant, versatile and straightforward."

by Yu Zhao (Google), April 13th, 2021

The new Multigenerational LRU Framework is not based on the current, it is all brand new. Google tried and gave up on improving the existing code:

"We have tried but concluded the aforementioned problems are fundamental, and therefore changes made on top of them will not result in substantial gains."

by Yu Zhao (Google), April 13th, 2021

Google's approach is to mark memory pages with generation numbers and use frequent differential scans to check what memory pages have been referenced since the last scan.

"The notion of generation numbers introduces a quantitative approach to memory overcommit. A larger number of pages can be spread out across a configurable number of generations, and each generation includes all pages that have been referenced since the last generation. This improved granularity yields relatively low false active/inactive rates."

by Yu Zhao, April 13th, 2021

This approach seems sound, and it may turn out to be a huge improvement even though Google's stated motivation for writing it is ludicrous and far beyond ridiculous:

"Over the past decade of research and experimentation in memory overcommit, we observed a distinct trend across millions of servers and clients: the size of page cache has been decreasing because of the growing popularity of cloud storage."

by Yu Zhao (Google), April 13th, 2021

It is true that people stuck with a cheap and weak notebook with 4 GiB memory that is running ChromeOS will not have a huge page cache, there isn't room for one in such a tiny amount of memory. It is also true that someone running ChromeOS will likely be using someone else's storage device (a.k.a "cloud storage") instead of local storage. There aren't that many alternatives if you have a computer with a total of 32 GiB storage space soldered onto the motherboard with no way to expand it. Android devices have similar limitations. Real desktop and laptop computers running fully featured GNU/Linux operating systems will typically have a lot more memory and storage. It is lucky that Google ended up with something that seems to be somewhat sound even though they appear to be blind to anything beyond their own little ecosystem bubble. Facebook's Johannes Weiner had this to say about Google's blinders:

"This gives the impression that because the number of setups heavily using the page cache has reduced somewhat, its significance is waning as well. I don't think that's true. I think we'll continue to have mainstream workloads for which the page cache is significant."

by Johannes Weiner (Facebook) on April 14th, 2021

Google's Yu Zhao sent their first attempt at a new LRU Framework to the Linux kernel mailing list (LKML) one month ago. Kernel developer Jens Axboe was not impressed. Google has now sent their second (v2) attempt to the LKML with improvement based on his and other kernel developers input.

"The initial posting of this patchset did no better, in fact it did a bit worse. Performance dropped to the same levels and kswapd was using as much CPU as before, but on top of that we also got excessive swapping. Not at a high rate, but 5-10MB/sec continually.

I had some back and forths with Yu Zhao and tested a few new revisions, and the current series does much better in this regard. Performance still dips a bit when page cache fills, but not nearly as much, and kswapd is using less CPU than before."

by Jens Axboe (Facebook), April 13th, 2021.

The second version was better than the first, but there is still a long way to go before the Google-proposed Multigenerational LRU Framework becomes a part of any mainline Linux kernel. Several seasoned kernel developers are skeptical, and for good reason. Linux isn't just used on smartphones and thin notebooks running ChromeOS, it is used by all kinds of server applications such as web servers, database applications and a whole lot of other widely differing applications. The current LRU framework is tried and true, the Multigenerational LRU Framework is not.

Dave Chinner is one of the kernel developers who's not convinced.

"It's your job to convince us that it works, not the other way around. It's up to you to prove that your assertions are correct, not for us to prove they are false."

Chinner is also unimpressed with some of the the benchmarks done to showcase the new Multigenerational LRU Framework:

"All this tells us is that there was *less contention on the mapping tree lock*. It does not tell us why there was less contention.

You've handily omitted the kswapd profile, which is really the one of interest to the discussion here - how did the memory reclaim CPU usage profile also change at the same time?"

Chinner later added that:

"I don't think that profiles are going to give us the level of detail required to determine how this algorithm is improving performance. That would require careful instrumentation of the memory reclaim algorithms to demonstrate any significant change in behavior, and then to prove that it's a predictable, consistent improvement across all types of machines rather than just being a freak of interactions with a specific workload on specific hardware would need to be done."

Facebook's Johannes Weiner appears to be skeptical to the implementation as well as the motivation behind it.

"If we accept that the current two generations are not enough, how many should there be instead? Four? Ten?

What determines this? Is it the workload's access pattern? Or the memory size?

How do I know whether the number of generations I have chosen is right for my setup? How do I detect when the underlying factors changed and it no longer is?

How does it manifest if I have too few generations? What about too many?

What about systems that host a variety of workloads that come and go? Is there a generation number that will be good for any combination of workloads on the system as jobs come and go?

For a general purpose OS like Linux, it's nice to be *able* to tune to your specific requirements, but it's always bad to *have* to. Whatever we end up doing, there needs to be some reasonable default behavior that works acceptably for a broad range of workloads out of the box."

by Johannes Weiner (Facebook) on April 14th, 2021

Weiner's questions are good questions. It will take time before the kernel community works out just how the Multigenerational LRU Framework impacts the many workloads Linux-based systems encounter and it will take longer to work out what kind of defaults it should have.

We fully expect this to be one of those patch-sets that goes through eight or more revisions before it gets merged. It is almost certain that it won't be merged into Linux 5.13 when the merge window opens, or Linux 5.14 for that matter. This seems like something worth looking for when Linux 5.18 or 5.20 are released in the far-distant future, and it will likely be an option, not a default, if/when it is merged.

The Russians are aware of Googles LRU efforts.

Enable comment auto-refresher